The New Math of the AI Worker Economy

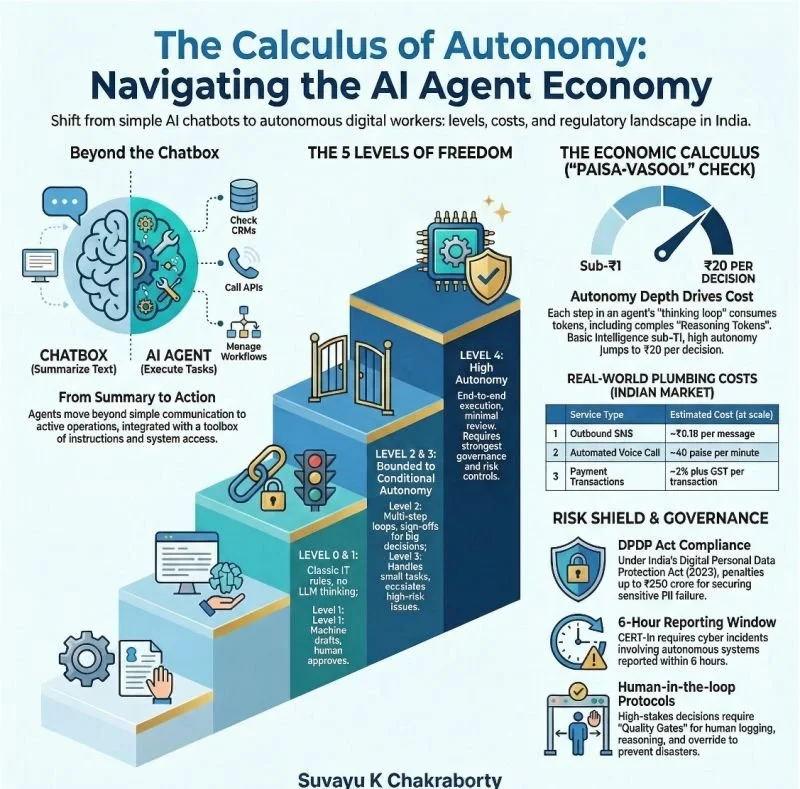

The conversation in boardrooms is shifting fast. We’re moving away from treating AI as a fancy chatbot and starting to see it for what it really is: a digital worker. In professional terms, we call these Agents: Large Language Models (LLMs) that have been given a toolbox of instructions and system access to actually get things done. They aren't just summarizing emails anymore; they are calling APIs, checking CRMs, and closing tickets.

But as we hire these digital employees, the bill is changing. If you’re looking at this from a CFO’s lens, here is the first paisa-vasool (value-for-money) reality check: agent count is a vanity metric. It doesn't matter if you have ten agents or a hundred. What actually drives your cost is Autonomy Depth.

The Five Levels of Freedom

To manage the budget, you need to understand how much power you’re giving the machine. Not all agents are equal:

- Level 0 (The Classic IT): Basic rules and workflows. No LLM, no thinking, just standard automation

- Level 1 (The Copilot): The machine drafts, but the human clicks send. Usually, this is just one model call per task

- Level 2 (The Bounded Agent): The LLM starts using tools but can’t commit to anything big without your sign-off. This is where the multi-step loop begins and the costs start to climb

- Level 3 (Conditional Autonomy): The machine handles the small stuff and only escalates the high-risk issues

- Level 4 (High Autonomy): End-to-end execution. The machine runs the show with minimal review. This requires the strongest governance and risk controls you can possibly build
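One way to make these levels concrete is to write the sign-off rule down as a policy. The sketch below is a simplification, not a reference implementation: the names `AutonomyLevel` and `needs_human_signoff` are hypothetical, risk is reduced to a single yes/no flag, and Level 2 is assumed to need sign-off on every committed action.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The five levels described above (names are illustrative)."""
    CLASSIC_IT = 0      # rules and workflows, no LLM
    COPILOT = 1         # machine drafts, human sends
    BOUNDED_AGENT = 2   # uses tools, cannot commit without sign-off
    CONDITIONAL = 3     # handles small stuff, escalates high risk
    HIGH = 4            # end-to-end execution, minimal review


def needs_human_signoff(level: AutonomyLevel, high_risk: bool) -> bool:
    """Return True when a human must approve before an action is committed."""
    if level == AutonomyLevel.CLASSIC_IT:
        return False  # plain automation: there is no LLM decision to review
    if level in (AutonomyLevel.COPILOT, AutonomyLevel.BOUNDED_AGENT):
        return True   # assumption: every commit waits for a human
    if level == AutonomyLevel.CONDITIONAL:
        return high_risk  # only high-risk actions are escalated
    return False      # Level 4: no inline gate, governance happens around it


if __name__ == "__main__":
    for level in AutonomyLevel:
        print(level.name, needs_human_signoff(level, high_risk=True))
```

The point of writing it this way is that Level 4 is the only LLM level where nothing stops the action in-line, which is why it needs the heaviest controls around it.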

The Bill: Tokens vs. Tools

When an agent thinks and acts, it isn't a straight line. It’s a loop: the model decides, it uses a tool, it looks at the result, and then it decides again. Each step consumes tokens.
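To see why the loop matters for the bill, here is a rough sketch of how tokens add up across steps. It assumes the agent re-sends its full history on every step (common with stateless chat APIs, and ignoring any prompt caching); the function name and all the token counts are made up for illustration.

```python
def loop_tokens(steps, base_prompt_tokens, output_tokens_per_step, tool_result_tokens):
    """Total (input, output) tokens for an agent loop that re-sends history each step."""
    total_in = 0
    total_out = 0
    context = base_prompt_tokens  # what the model reads on the first step
    for _ in range(steps):
        total_in += context                  # model reads the whole context so far
        total_out += output_tokens_per_step  # model decides and emits a tool call
        # The decision and the tool result both join the history for the next step.
        context += output_tokens_per_step + tool_result_tokens
    return total_in, total_out


if __name__ == "__main__":
    print(loop_tokens(1, 1000, 200, 300))  # one-shot call
    print(loop_tokens(4, 1000, 200, 300))  # four-step agent loop
```

With these illustrative numbers, one call reads 1,000 input tokens, while a four-step loop reads 7,000: four times the steps, seven times the input. Under this assumption, input tokens grow faster than the step count.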

A big hidden line item today is Reasoning Tokens. With reasoning models such as OpenAI’s o-series or Google’s Gemini thinking models, the machine spends a lot of tokens on internal monologue to figure out a complex problem. You might not see these tokens on your screen, but you are absolutely being billed for them as output.
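A small back-of-the-envelope sketch shows the effect. The function name, the token counts, and the price of ₹1,000 per million output tokens are all hypothetical placeholders, not any vendor's actual rate.

```python
def output_cost(visible_tokens, reasoning_tokens, price_per_million_output):
    """Cost of one response when hidden reasoning tokens are billed as output."""
    billed_tokens = visible_tokens + reasoning_tokens
    return billed_tokens * price_per_million_output / 1_000_000


if __name__ == "__main__":
    price = 1000  # hypothetical: rupees per million output tokens
    print(output_cost(300, 0, price))     # answer only
    print(output_cost(300, 2700, price))  # same answer plus hidden reasoning
```

In this made-up case the visible answer is identical, but the billed output is ten times larger once the reasoning tokens are counted.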

In the Indian context, the math gets even more interesting. We often obsess over the price of the AI, but for a standard customer interaction, the intelligence might cost sub-₹1, while the real-world plumbing costs more:

- Communication: A single outbound SMS costs ~₹0.18 at scale

- Voice: An outgoing automated call can be ~40 paise per minute

- Payments: Payment gateways charge ~2% plus GST per transaction
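The plumbing figures above can be added up for a single interaction. This is a rough sketch: the rates come from the list, while the function name, the 18% GST rate applied to the gateway fee, and the sample interaction are assumptions for illustration.

```python
SMS_RATE = 0.18            # rupees per outbound SMS (from the list above)
VOICE_RATE_PER_MIN = 0.40  # rupees per minute of automated call
GATEWAY_FEE = 0.02         # ~2% of the payment amount
GST_ON_FEE = 0.18          # assumption: 18% GST charged on the gateway fee


def plumbing_cost(sms_count, call_minutes, payment_amount):
    """Non-AI variable cost in rupees for one customer interaction."""
    communication = sms_count * SMS_RATE + call_minutes * VOICE_RATE_PER_MIN
    gateway_fee = payment_amount * GATEWAY_FEE
    return communication + gateway_fee * (1 + GST_ON_FEE)


if __name__ == "__main__":
    # Hypothetical interaction: one SMS, a two-minute call, a 500-rupee payment.
    print(round(plumbing_cost(1, 2, 500), 2))
```

For this sample interaction the plumbing comes to roughly ₹12.78, against an intelligence cost of under ₹1.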

Key takeaway? At high autonomy, your variable cost can easily jump from a few paise to ₹20 per decision once you factor in those heavy reasoning loops and external APIs.